Here's the thing: most AI assistants in product support today are essentially fancy search engines. A customer asks "where's my order?" and the AI replies "go to your account page and check order status" - technically correct but practically useless. The customer wanted an answer, not homework.

This month, we're changing that. We're introducing Generative Actions - giving our AI assistants the ability to actually act on the customer's behalf: check part compatibility, verify warranty coverage, complete product registration - all inside the conversation, in real time. Actions can be multi-step and contextual when required, because the products and industries we serve demand it.

But that's not all. We've also made Voice Assist significantly more scalable with reusable components, structured knowledge imports, and the option to let it answer beyond the brand's own knowledge if needed.

Let's dig in!

Generative Actions: AI that acts, not just answers

I already mentioned the problem, and you've probably experienced it yourself. When someone calls about the right spare part for their device, they don't want to be told to go check on a website. They want to know exactly what is compatible.

With Generative Actions, our AI assistant can now connect to external systems - Shopify, internal APIs, CRM platforms - and perform actions directly within the conversation.

The assistant collects the information it needs (and it's smart about it: it knows that a product model number should be 8 digits, so it won't proceed with invalid input), calls the relevant system, and explains the result - all conversationally.

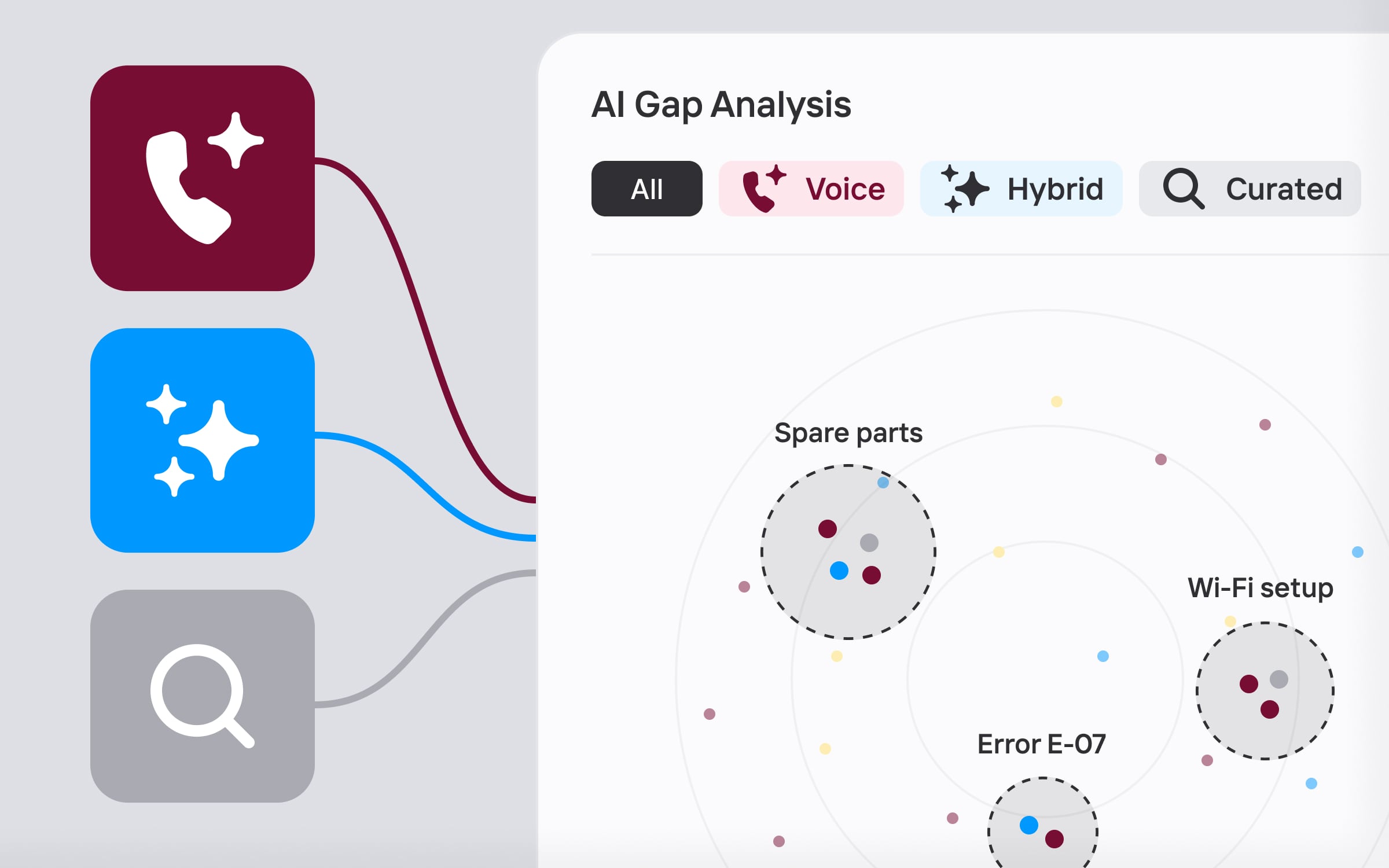
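
To make the validate-then-call pattern concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how Generative Actions are implemented: the function names, the reply wording, and the `fake_lookup` stand-in for an external system are all hypothetical.

```python
import re
from typing import Callable, Optional

# Assumption: "8 digits" means exactly eight ASCII digits and nothing else.
MODEL_NUMBER = re.compile(r"[0-9]{8}")


def validate_model_number(raw: str) -> Optional[str]:
    """Return the trimmed model number, or None if it is not exactly 8 digits."""
    candidate = raw.strip()
    return candidate if MODEL_NUMBER.fullmatch(candidate) else None


def check_compatibility(raw_model: str, part_id: str,
                        lookup: Callable[[str, str], bool]) -> str:
    """Validate the input, call the external system, and phrase the result."""
    model = validate_model_number(raw_model)
    if model is None:
        # Invalid input never reaches the external system; ask again instead.
        return "That model number should be 8 digits. Could you double-check it?"
    if lookup(model, part_id):
        return f"Yes, part {part_id} is compatible with model {model}."
    return f"No, part {part_id} is not compatible with model {model}."


# Hypothetical stand-in for a real integration (Shopify, an internal API, a CRM).
def fake_lookup(model: str, part_id: str) -> bool:
    return (model, part_id) in {("12345678", "FILTER-A")}


print(check_compatibility("1234", "FILTER-A", fake_lookup))
print(check_compatibility("12345678", "FILTER-A", fake_lookup))
```

The point of the sketch is the ordering: the input is checked before the lookup runs, so the external system is only ever called with a well-formed model number.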

What makes this powerful is that Generative Actions, curated answers, and generative answers from documents all coexist in the same conversation. Users can switch between them seamlessly - it all just flows.

We're taking this further into pre-purchase support. Research shows that 7 out of 10 shoppers abandon a purchase before completing the transaction, and for high-involvement purchases like home appliances, abandonment hits 74%. Often it's not about price or trust - it's that people don't know what to pick, get overwhelmed, and leave.

Our multimodal AI concierge helps customers navigate exactly this. It asks the right questions, narrows down options, surfaces a comparison, adds the chosen product to cart, and upsells relevant accessories - all while navigating the actual e-commerce shop for you! Intrigued? Schedule a demo with us!

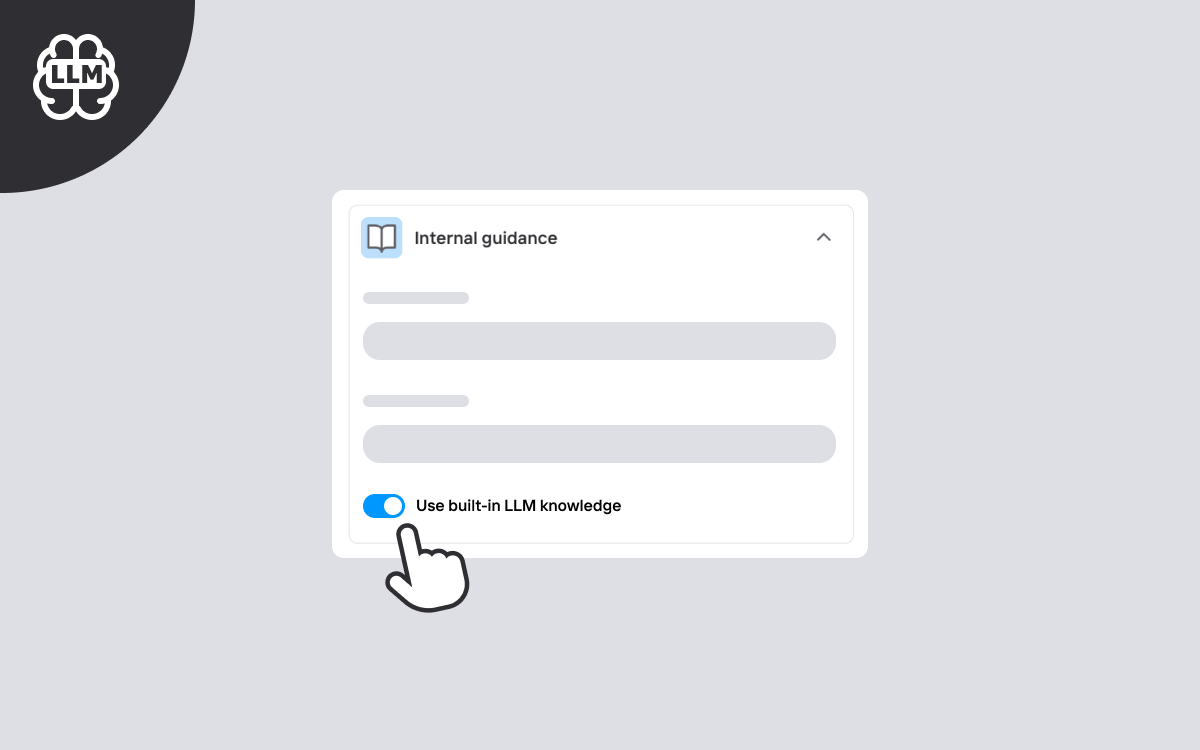

Expanded topic coverage with LLM knowledge

Not every customer question maps neatly to a predefined intent - and it shouldn't have to. Across both Voice Assist and Digital Hybrid experiences, brands can now allow the AI assistant to answer beyond predefined topics using the LLM's built-in knowledge, with appropriate hedging (e.g., "Based on general knowledge…").

This is especially valuable for mass-market brands where there's extensive publicly available knowledge about the product - popular consumer electronics, home appliances, and similar categories. For niche or proprietary domains where LLM knowledge may be less reliable, the feature can stay off. It's disabled by default, so nothing changes unless you choose to enable it.
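
The opt-in behaviour described above could be sketched roughly like this. Everything here is hypothetical (the `answer` function, the `allow_general_knowledge` flag name, and the two callables standing in for brand knowledge and the LLM); it only illustrates the order of checks and the off-by-default setting.

```python
from typing import Callable, Optional

# Hedging phrase taken from the example in the text above.
HEDGE_PREFIX = "Based on general knowledge, "


def answer(question: str,
           brand_lookup: Callable[[str], Optional[str]],
           llm_answer: Callable[[str], str],
           allow_general_knowledge: bool = False) -> Optional[str]:
    """Prefer brand knowledge; fall back to a hedged LLM answer only if enabled."""
    brand = brand_lookup(question)
    if brand is not None:
        return brand  # Brand-approved content always wins.
    if not allow_general_knowledge:
        return None  # Feature is off by default: no general-knowledge answer.
    return HEDGE_PREFIX + llm_answer(question)
```

With the flag left at its default, a question outside the brand's knowledge returns `None`, which mirrors the "nothing changes unless you choose to enable it" behaviour.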

Voice Assist: build once, reuse everywhere

The updates we've launched this month make Voice Assist dramatically more scalable.

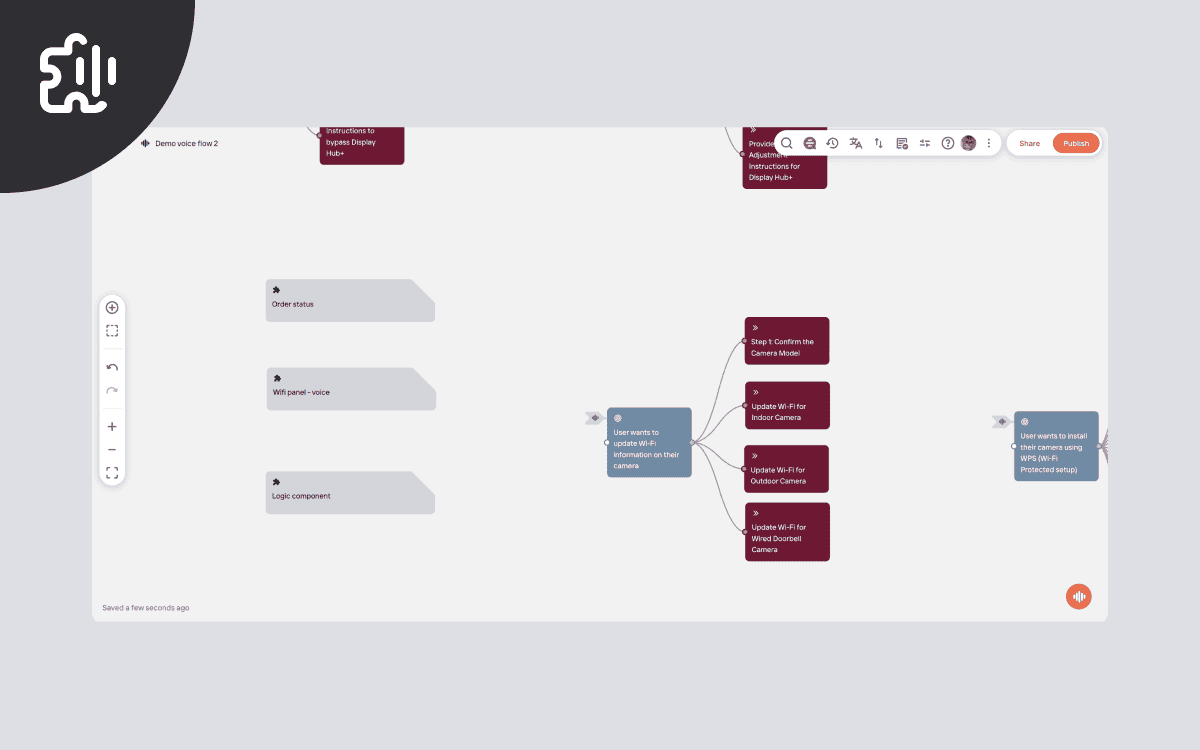

Voice Components

Voice Components let you create reusable blocks of voice content or logic that can be included in any voice flow - just like components already work for digital experiences. You can even create mixed components that combine voice-only nodes (like Intent and Instruction) with logic nodes (Action, Read Data, Write Data), making it easy to reuse integrations you've already built for digital experiences inside your voice assistant. Build once - reuse everywhere.

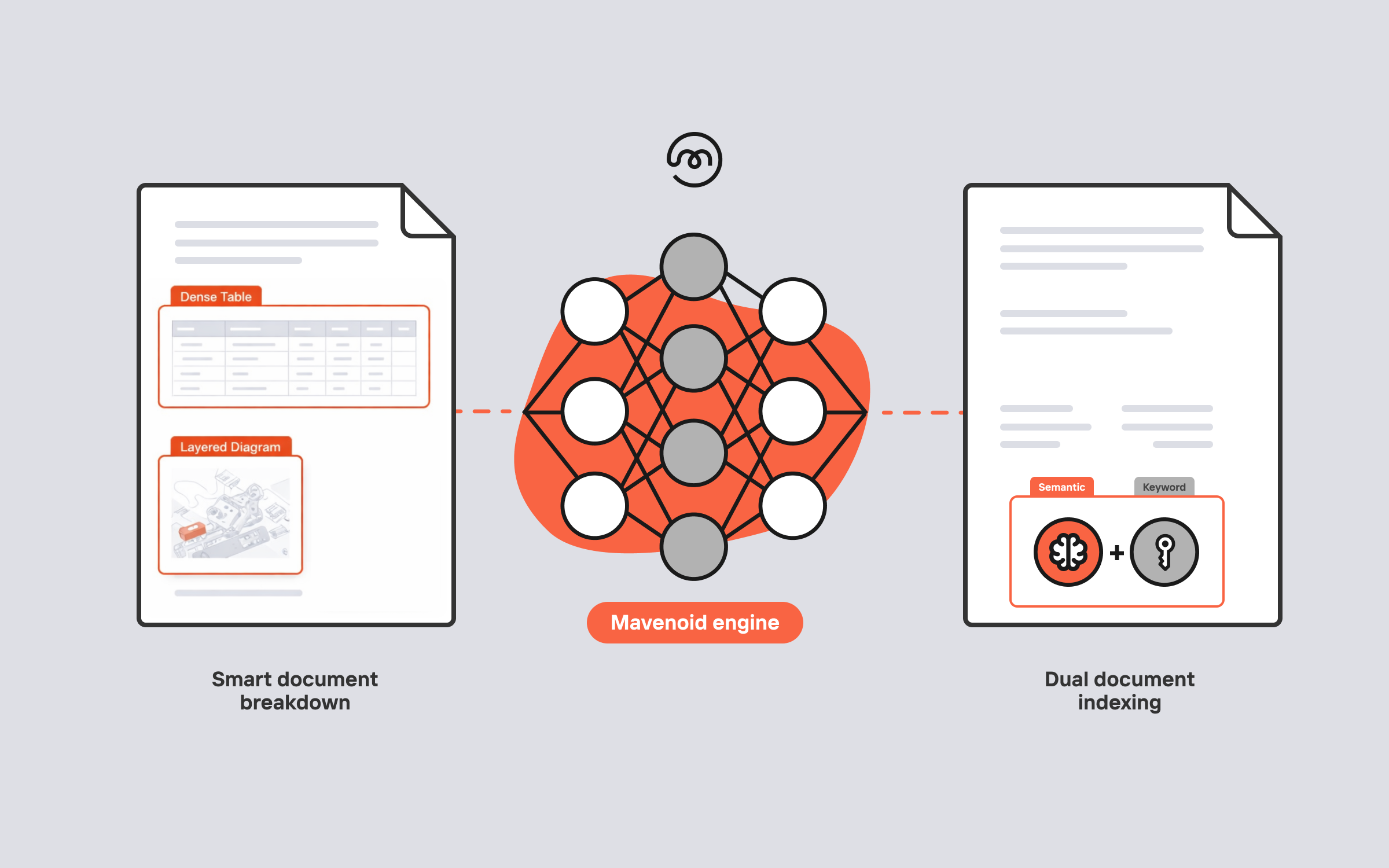

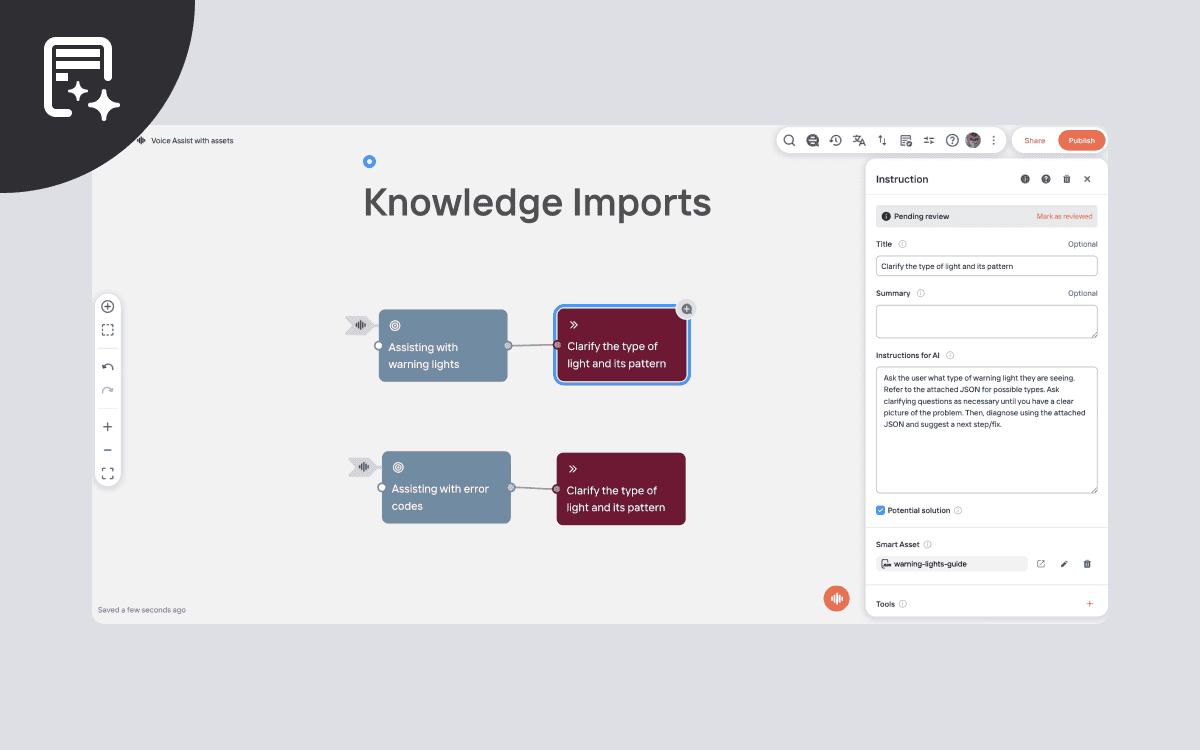

Knowledge Imports

Voice Assist can now reason over structured product knowledge - error codes, troubleshooting guides, specs - directly from JSON files attached as "smart assets" to instructions. The assistant reads and reasons over the imported knowledge naturally: asking clarifying questions about warning light colours or blink patterns, explaining error codes, all sourced from the attached document. Update the file once - and every topic using it picks up the change automatically.
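
As a rough illustration of what such structured knowledge might look like, the Python sketch below embeds a small JSON document and narrows it down by light colour and blink count. The schema, field names, and product are invented for this example and are not the actual import format; in the product the reasoning is done by the assistant, not by hand-written lookup code.

```python
import json
from typing import Dict, List, Optional

# Hypothetical knowledge file; the schema is an assumption for illustration.
KNOWLEDGE_FILE = """
{
  "product": "Example Dishwasher",
  "error_codes": [
    {"code": "E1", "light": "red", "blinks": 2, "meaning": "Door is not closed."},
    {"code": "E2", "light": "red", "blinks": 3, "meaning": "Water inlet is blocked."},
    {"code": "E3", "light": "amber", "blinks": 1, "meaning": "Filter needs cleaning."}
  ]
}
"""


def load_entries(text: str) -> List[Dict]:
    """Parse the JSON document and return its list of error-code entries."""
    return json.loads(text)["error_codes"]


def next_step(entries: List[Dict], light: str, blinks: Optional[int] = None) -> str:
    """Explain the error if it is unambiguous, otherwise ask a clarifying question."""
    found = [e for e in entries if e["light"] == light]
    if blinks is not None:
        found = [e for e in found if e["blinks"] == blinks]
    if not found:
        return "I couldn't find a matching error code."
    if len(found) > 1:
        # Several codes share this light colour, so more detail is needed.
        return "How many times does the light blink?"
    return f"That is error {found[0]['code']}: {found[0]['meaning']}"


entries = load_entries(KNOWLEDGE_FILE)
print(next_step(entries, "red"))
print(next_step(entries, "red", blinks=3))
```

Because every answer is derived from the one file, editing an entry there changes the result everywhere it is used, which is the same idea as updating a smart asset once.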

Deeper and more personalized CCaaS integration

For brands running Voice Assist alongside a contact centre platform, this update brings tighter integration through SIP headers.

When a CCaaS system transfers a call to our Voice Assist agent, it often already knows things about the caller - name, account number, language preference. With SIP headers, that context now travels with the call. Our AI agent can use it to personalise the conversation from the very first moment - greeting the caller by name, referencing their account, routing appropriately.

It works both ways. When we transfer a call back (say, escalating to a human agent), we forward the original headers plus any new information collected during the conversation to the CCaaS: customer email, issue category, whatever was gathered. The human agent gets full context without the customer repeating themselves.
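
The two-way flow can be sketched in a few lines of Python. This is a simplified illustration, not our integration code: the `X-` header names, the sample INVITE, and both helper functions are assumptions, and the parser ignores details such as folded (multi-line) headers.

```python
from typing import Dict

# Hypothetical inbound INVITE; header names and values are made up.
INBOUND_INVITE = (
    "INVITE sip:voice-assist@example.com SIP/2.0\r\n"
    "From: <sip:ccaas@example.com>;tag=1\r\n"
    "X-Caller-Name: Dana\r\n"
    "X-Account-Number: 12345\r\n"
    "X-Language: en-GB\r\n"
    "\r\n"
)


def parse_custom_headers(message: str, prefix: str = "X-") -> Dict[str, str]:
    """Collect headers whose name starts with `prefix` (case-insensitive)."""
    headers: Dict[str, str] = {}
    lines = message.split("\r\n")
    for line in lines[1:]:  # Skip the request line.
        if not line:
            break  # A blank line ends the header section.
        name, sep, value = line.partition(":")
        if sep and name.strip().lower().startswith(prefix.lower()):
            headers[name.strip()] = value.strip()
    return headers


def build_transfer_headers(original: Dict[str, str],
                           collected: Dict[str, str]) -> Dict[str, str]:
    """Forward the original headers plus anything gathered during the call."""
    merged = dict(original)
    merged.update(collected)
    return merged


inbound = parse_custom_headers(INBOUND_INVITE)
greeting = f"Hello {inbound.get('X-Caller-Name', 'there')}, how can I help?"
outbound = build_transfer_headers(
    inbound,
    {"X-Customer-Email": "dana@example.com", "X-Issue-Category": "warranty"},
)
print(greeting)
print(outbound)
```

Copying the inbound headers before adding new ones keeps the original context intact, so the human agent receives what the CCaaS already knew alongside what the assistant learned.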

More power for your admin teams

Several updates this month give customer admins more direct control.

Voice Answer Settings and Knowledge Imports are now available to admins, so your team can configure voice behaviour, set up knowledge files, and fine-tune the assistant.

Source visibility control lets you decide whether an uploaded document appears as a clickable source link in the digital assistant's answers. Some documents power the AI effectively but aren't intended for end-user consumption. Now you can use them as knowledge sources while keeping them hidden from the assistant's responses - just uncheck a box.

What's next

We're continuing to push on agentic capabilities for both digital and voice, expanding what our assistants can do, not just what they can answer. If you'd like to see any of these capabilities in action, reach out to your Mavenoid contact or request a demo.

Until next time!